I picked up *How Will You Measure Your Life?* by Clayton Christensen during the Easter holidays. It's one of those books you grab expecting a light read for the break, and then you find yourself still underlining passages late into the evening. It doesn't let go easily.

There is a version of AI use that makes you faster without costing you anything. You understand the architecture, you know what the code should do, and you use the model to handle the boilerplate, draft the test you already know how to write, translate the regex you never remember. That is tool use. The understanding lives in your head and the model is saving your fingers.

There is another version where you use AI to bypass the step where you struggle with the problem. You paste in the error, or have the model fetch it from Sentry over MCP, the model fixes it (or appears to), and you move on. This is outsourcing.

The output looks the same from the outside: the bug gets fixed, the PR ships. But something different happened inside the engineer's head. In the first case, they understood. In the second, they borrowed understanding, and borrowed understanding is fine once, but it accumulates into a problem.

A stack trace is not just an error message. It is a data structure that describes precisely what went wrong and where, in what order, through which layers. Reading one fluently takes years of practice, not because the format is complicated, but because fluency comes from pattern-matching, and pattern-matching comes from repetition. You need to have seen enough of them to notice when something is off: when the call depth is wrong, when the error at the top is a symptom and the real cause is three frames down. That skill is built by reading them slowly, and sometimes getting them wrong.

You cannot outsource that reading and still build the skill.
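To make the "data structure" point concrete, here is a small Python sketch that captures a traceback and lists its frames, innermost first. The function names and the `PORT` key are hypothetical, invented for this illustration. The exception is raised in `parse_port`, but that frame is only the symptom: the actual mistake, silently defaulting a missing value to an empty string, sits one frame out in `load_config`.

```python
import traceback


# Hypothetical three-layer call chain; every name here is illustrative.
def parse_port(raw: str) -> int:
    # The exception is raised here, but this function is not where the bug is.
    return int(raw)


def load_config(env: dict) -> dict:
    # The actual mistake: a missing PORT silently becomes "" instead of failing early.
    return {"port": parse_port(env.get("PORT", ""))}


def start_server(env: dict) -> int:
    return load_config(env)["port"]


def frames_for(env: dict) -> tuple[str, list[str]]:
    """Return the exception type name and the traceback's function names, innermost first."""
    try:
        start_server(env)
    except Exception as exc:
        # extract_tb turns the raw traceback into a list of FrameSummary objects.
        summary = traceback.extract_tb(exc.__traceback__)
        return type(exc).__name__, [frame.name for frame in reversed(summary)]
    return "", []


if __name__ == "__main__":
    print(frames_for({}))
```

Running it prints `('ValueError', ['parse_port', 'load_config', 'start_server', 'frames_for'])`. The frame at the top of that list is where Python noticed the problem; reading one frame further is where you find out why.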

The same goes for debugging under pressure, for building a mental model of an unfamiliar codebase, for estimating whether a refactor is safe. These are not things you acquire from a course; they accumulate from doing, from being wrong, and from eventually being right for reasons you can actually explain.

One of the stories in the book is about Dell and Asus. Dell, trying to trim costs, began outsourcing components to Asus, first the simple boards and then more complex assemblies. Each individual decision made economic sense, margins improved, and the decision looked correct on every quarterly report. Then Asus launched its own laptops. They had learned everything Dell knew, because Dell had taught them, one outsourced capability at a time.

Christensen uses this story to make a point that cuts far deeper than supply chains. When you delegate a capability, you lose it: not immediately, but gradually and invisibly. Each step feels rational, while the sum of them leads somewhere you didn't intend.

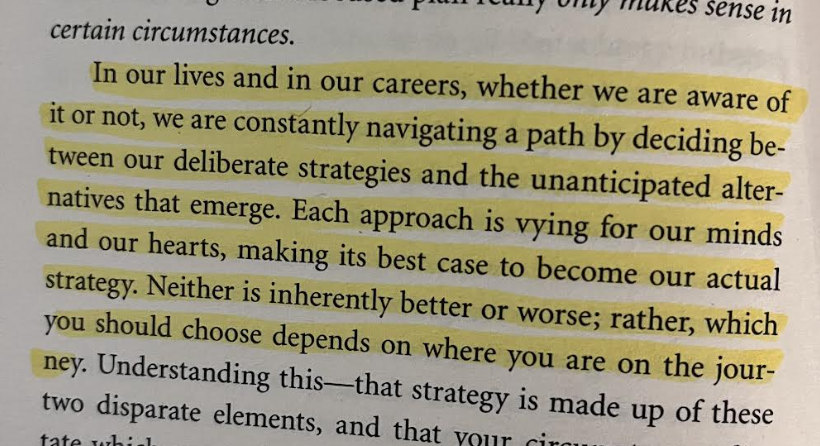

He also wrote something that has stayed with me:

Most engineers didn't deliberately decide to outsource their thinking to AI; it emerged. The tool appeared, it was useful, and bit by bit it took over steps that used to belong to us. That is an emergent strategy. Christensen's point is that at some point you have to decide, consciously, what your actual strategy is: what you are building and what you are keeping.

Christensen spent his career studying why successful companies fail. Not bad companies, but great ones, run by intelligent people making individually rational decisions. He kept finding the same pattern: the choices that eventually killed them looked correct at the time. The problem was not the decision but the measurement; they were optimizing the right thing on the wrong horizon. Near the end of his life, he started asking his students to apply that framework to themselves. The question is not about salaries or titles; it is about the person you are becoming through the daily choices that seem too small to think about.

If you consistently use AI to skip the step where you have to think, you are not saving time; you are making the Dell decision. Each of those choices looks fine in the moment, but the cumulative effect is a capability you never finished building, or one you built and then handed away.

I am not worried about AI making engineers obsolete; that framing is a distraction. What I think about is the engineer who at 40 has fifteen years in the industry but cannot hold a complex system in their head long enough to reason about it, who cannot debug something genuinely new without a chatbot, who cannot make an architectural argument on a whiteboard without first checking what the model thinks.

The machines are getting better at fixing things; the question is whether you still know how to understand them.

The failures are the curriculum. The error messages are the syllabus.